Artificial intelligence is moving fast. People are using generative AI and large language models (LLMs) to build new services and perform existing tasks, and the underlying technology itself is advancing quickly. As the Nobel laureate economist Michael Spence observes, this wave of adoption could well yield significant productivity gains, after almost two decades of lackluster growth. Every day brings news like Google’s recent announcement that its AI has helped American Airlines cut contrails by 54%, reducing each flight’s climate footprint.

But the news isn’t all good. As matters stand, AI is more likely to help Big Tech companies cement their dominance. They are the ones with the resources to develop and maintain the most powerful AI models, and they are already moving quickly to bundle LLMs with their existing services. These developments come at a time when antitrust authorities around the world are already growing increasingly concerned about tech companies’ market power.

To be sure, some commentators – including one Google engineer, in an internal memo – argue that this fear is overblown, owing to the presence of open-source LLMs that technically allow anyone to compete in the market. But even if there is a blossoming of smaller new entrants, Big Tech’s dominance still looks secure. A recent paper comparing open-source models to the AI application programming interface (API) services that Big Tech companies are providing to third parties finds that the latter perform much better on most criteria.

Perhaps that will change. But, for now, the leading LLMs’ performance continues to improve with increased investment, and they may be approaching tipping points where they will be able to demonstrate new and unexpected capabilities. Deep pockets matter.

Given Big Tech’s sheer power in many countries, it is little wonder that policymakers are struggling to devise forceful, effective, and coherent responses. In some jurisdictions, policymakers and industry leaders are already locked in political stand-offs. For example, Meta (Facebook) recently blocked news links originating from Canada in response to the Canadian government’s requirement that platforms compensate news publishers. A similar spat occurred previously in Australia, where the government has since announced new plans to fine online platforms for abetting the spread of misinformation.

In the United Kingdom, a much-criticized “online safety bill” has led some tech companies to threaten to pull out of the market altogether. And in the United States, Congress has considered pro-competition interventions such as the proposed Open App Markets Act, and newly activist antitrust authorities within the Biden administration have brought various suits against Google, Amazon, Meta, and Apple.

But while some policymakers do have deep knowledge about AI, their expertise tends to be narrow, and most other decision-makers simply do not understand the issue well enough to craft sensible policies. Owing to this relatively low knowledge base and the inevitable asymmetry of information between regulators and regulated, policy responses to specific issues are likely to remain inadequate, heavily influenced by lobbying, or highly contested.

So, what is to be done? Perhaps the best option is to pursue more of a principles-based policy. This approach has already gained momentum in the context of issues like misinformation and trolling, where many experts and advocates believe that Big Tech companies should have a general duty of care (meaning a default orientation toward caution and harm reduction).

In some countries, similar principles already apply to news broadcasters, who are obligated to pursue accuracy and maintain impartiality. Although enforcement in these domains can be challenging, the upshot is that we do already have a legal basis for eliciting less socially damaging behavior from technology providers.

When it comes to competition and market dominance, telecoms regulation offers a serviceable model with its principle of interoperability. People with competing service providers can still call each other because telecom companies are all required to adhere to common technical standards and reciprocity agreements. The same is true of ATMs: you may incur a fee, but you can still withdraw cash from a machine at any bank.

In the case of digital platforms, a lack of interoperability has generally been established by design, as a means of locking in users and creating “moats.” This is why policy discussions about improving data access and ensuring access to predictable APIs have failed to make any progress. But there is no technical reason why some interoperability could not be engineered back in. After all, Big Tech companies do not seem to have much trouble integrating the new services that they acquire when they take over competitors.

In the case of LLMs, interoperability probably could not apply at the level of the models themselves, since not even their creators understand their inner workings. However, it can and should apply to interactions between LLMs and other services, such as cloud platforms.

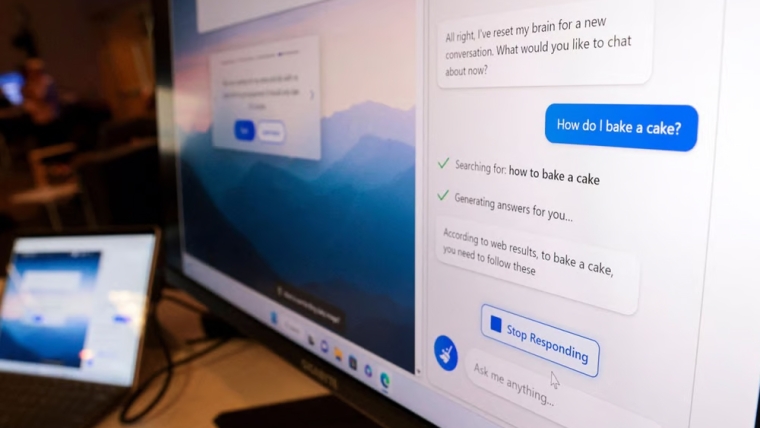

If I subscribe to Microsoft 365, for example, I should still be able to use Google’s PaLM 2, rather than being forced also to use Microsoft’s Copilot or GPT-4 add-on. This principle was established back in the landmark 2001 antitrust decision against Microsoft’s bundling of Internet Explorer, and again in the 2007 verdict against its bundling of Windows Media Player.

A firm commitment to apply the same principles to LLMs would go a long way toward preventing further market concentration. If AI is going to deliver on its promise for society, it needs to be widely accessible to all and subject to the improvements that come with free and fair competition.

Diane Coyle, Professor of Public Policy at the University of Cambridge, is the author, most recently, of Cogs and Monsters: What Economics Is, and What It Should Be (Princeton University Press, 2021). This content is © Project Syndicate, 2023, and is here with permission.

1 Comment

A good article, thank you.

I think the only thing I would add is that to commodify the benefits of AI we will need the large tech companies to make it yet another utility. General AI is generally useful to the wider public; once it is widely understood and widely used by corporates, the antitrust aspects can be argued, but by then the first movers will have cemented their place. I think this is a fair trade-off to enable access for the masses and deliver the supposed productivity uplift hoped for.

Until AI gets so simple that it can be installed like any other application, it will require IT horsepower to drive it to specific use cases, and so there will be a niche private-LLM industry until this too is absorbed by the Borg.
