Today's Top 10 is a guest post from Tom Coupé. Originally from Belgium, he's an Associate Professor in the Economics and Finance Department at Canterbury University.

As always, we welcome your additions in the comments below or via email to david.chaston@interest.co.nz.

And if you're interested in contributing the occasional Top 10 yourself, contact gareth.vaughan@interest.co.nz.

See all previous Top 10s here.

An Artificial Intelligence Top 10

Artificial Intelligence (AI) is a hot topic these days, including in New Zealand. On March 28, NewZealand.AI and the AI Forum NZ even organized an ‘AI day’, ‘the biggest AI event ever to be held in New Zealand’. In this Top 10, I cover 10 recent articles which should give you a good introduction to Artificial Intelligence: what it is, when to expect its full realization, its economic impact (or lack thereof), and whether or not you should start worrying about your job. And as an extra: Mark Zuckerberg’s trash as a source of data!

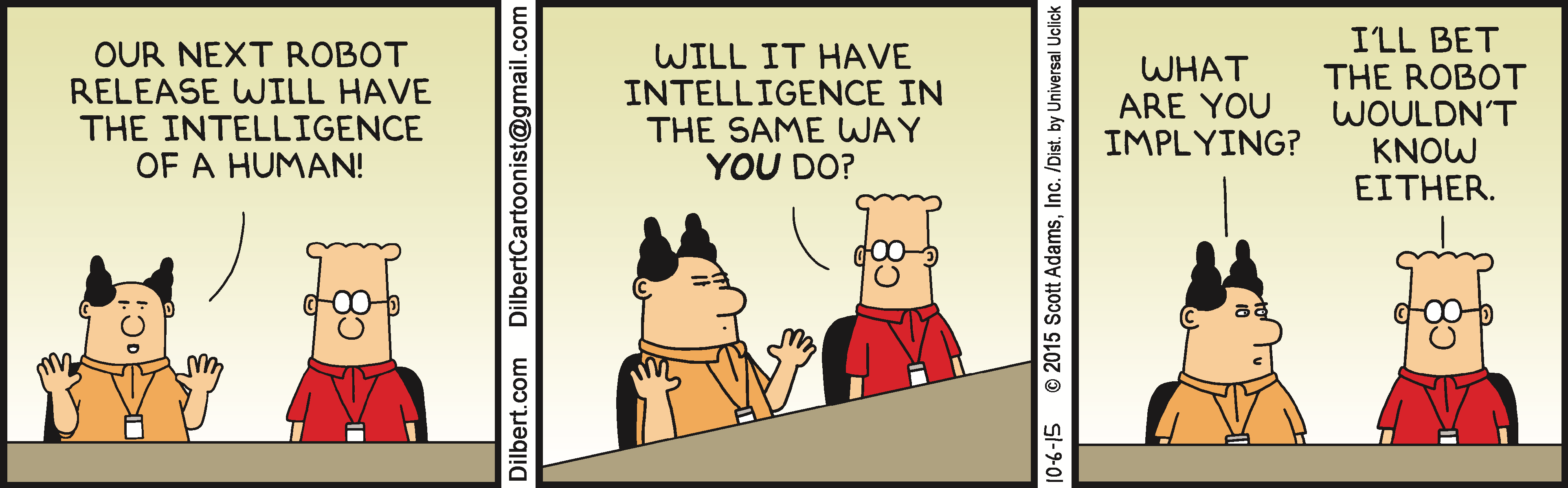

- AI definition.

As is often the case with hot topics (see my top 10 on Big Data for another example), different people define the hot topic, in this case ‘artificial intelligence’, in different ways. One article defines artificial intelligence as ‘the broader concept of machines being able to carry out tasks in a way that we would consider “smart”’. Another article defines artificial intelligence as a ‘technology that behaves intelligently using skills associated with human intelligence (e.g. ability to think, learn and act autonomously)’, before adding 23 other possible definitions.

- So how far are we from computers reaching human-like intelligence?

Since there is still no artificial intelligence that can foresee the future, in the meantime one needs to ask 350 AI researchers to get an answer to the above question.

Researchers predict AI will outperform humans in many activities in the next ten years, such as translating languages (by 2024), writing high-school essays (by 2026), driving a truck (by 2027), working in retail (by 2031), writing a bestselling book (by 2049), and working as a surgeon (by 2053). Researchers believe there is a 50% chance of AI outperforming humans in all tasks in 45 years and of automating all human jobs in 120 years.

- Some examples.

Two prime examples of the recent AI revolution are Google Translate and driverless cars.

A recent description of Google Translate’s AI breakthrough starts like this:

“Late one Friday night in early November, Jun Rekimoto, a distinguished professor of human-computer interaction at the University of Tokyo, was online preparing for a lecture when he began to notice some peculiar posts rolling in on social media. Apparently Google Translate, the company’s popular machine-translation service, had suddenly and almost immeasurably improved.”

Similarly, about self-driving cars:

When people think of self-driving cars, the image that usually comes to mind is a fully autonomous vehicle with no human drivers involved. The reality is more complicated: Not only are there different levels of automation for vehicles — cruise control is an early form — but artificial intelligence is also working inside the car to make a ride safer for the driver and passengers. AI even powers a technology that lets a car understand what the driver wants to do in a noisy environment: by reading lips.

- Not everybody is impressed though.

Despite all the success stories, there is still a long way to go. Indeed, the current success of AI still depends a lot on which specific measure of success one uses.

This is true for Google Translate.

I’ve recently seen bar graphs made by technophiles that claim to represent the “quality” of translations done by humans and by computers, and these graphs depict the latest translation engines as being within striking distance of human-level translation. To me, however, such quantification of the unquantifiable reeks of pseudoscience, or, if you prefer, of nerds trying to mathematize things whose intangible, subtle, artistic nature eludes them. To my mind, Google Translate’s output today ranges all the way from excellent to grotesque, but I can’t quantify my feelings about it. Think of my first example involving “his” and “her” items. The idealess program got nearly all the words right, but despite that slight success, it totally missed the point. How, in such a case, should one “quantify” the quality of the job? The use of scientific-looking bar graphs to represent translation quality is simply an abuse of the external trappings of science.

And for self-driving cars.

“Much of the push toward self-driving cars has been underwritten by the hope that they will save lives by getting involved in fewer crashes with fewer injuries and deaths than human-driven cars. But so far, most comparisons between human drivers and automated vehicles have been at best uneven, and at worst, unfair.

Crash statistics for human-driven cars are compiled from all sorts of driving situations, and on all types of roads. This includes people driving through pouring rain, on dirt roads and climbing steep slopes in the snow. However, much of the data on self-driving cars’ safety comes from Western states of the U.S., often in good weather. Large amounts of the data have been recorded on unidirectional, multi-lane highways, where the most important tasks are staying in the car’s own lane and not getting too close to the vehicle ahead.”

- If AI is so transformative, why don’t we see it in the productivity statistics?

While AI is very popular these days, so far the economic impact of AI has been hard to find in the aggregate economic statistics, leading to another “productivity paradox”.

“We see the effects of transformative new technologies everywhere except in productivity statistics. Systems using artificial intelligence (AI) increasingly match or surpass human-level performance, driving great expectations and soaring stock prices. Yet measured productivity growth has declined by half over the past decade, and real income has stagnated since the late 1990s for a majority of Americans.

What can explain this paradox?

Specifically, we found four possible reasons for the clash between expectations and statistics: (1) false hopes, (2) mismeasurement, (3) concentrated distribution of gains, and (4) implementation lags. While a case can be made for each of these four explanations, implementation lags are probably the biggest contributor to the paradox. In particular, the most impressive capabilities of AI — those based on machine learning and deep neural networks — have not yet diffused widely”.

Interestingly, a similar argument is being made about the impact of big data on the economy (see my top 10 on Big Data).

- More generally: beware of predictions about AI.

Humans have been predicting human-like computers for many years already, and so far they have been wrong many times, as documented by the paper below, which looks at the accuracy of 95 past AI timeline predictions.

This paper, the first in a series analyzing AI predictions, focused on the reliability of AI timeline predictions (predicting the dates upon which “human-level” AI would be developed). These predictions are almost wholly grounded on expert judgment. The biases literature classified the types of tasks on which experts would have good performance, and AI timeline predictions have all the hallmarks of tasks on which they would perform badly.

This was borne out by the analysis of 95 timeline predictions in the database assembled by the Singularity Institute. There were strong indications therein that experts performed badly. Not only were expert predictions spread across a wide range and in strong disagreement with each other, but there was evidence that experts were systematically preferring a “15 to 25 years into the future” prediction. In this, they were indistinguishable from non-experts, and from past predictions that are known to have failed. There is thus no indication that experts brought any added value when it comes to estimating AI timelines. On the other hand, another theory—that experts were systematically predicting AI arrival just before the end of their own lifetime—was seen to be false in the data we have. There is thus strong grounds for dramatically increasing the uncertainty in any AI timeline prediction.

Consistent with this, historically, AI hypes (‘AI summers’) have been followed by AI winters.

- But we will all lose our jobs, right?

Not everybody is that uncertain though. One observer recently warned his readers that they will soon lose their jobs to robots:

“I want to tell you straight off what this story is about: Sometime in the next 40 years, robots are going to take your job. I don’t care what your job is. If you dig ditches, a robot will dig them better. If you’re a magazine writer, a robot will write your articles better. If you’re a doctor, IBM’s Watson will no longer “assist” you in finding the right diagnosis from its database of millions of case studies and journal articles. It will just be a better doctor than you.

And CEOs? Sorry. Robots will run companies better than you do. Artistic types? Robots will paint and write and sculpt better than you. Think you have social skills that no robot can match? Yes, they can. Within 20 years, maybe half of you will be out of jobs. A couple of decades after that, most of the rest of you will be out of jobs.”

- Fear of robots or bad management?

But surveys show that most people are much more optimistic. A recent Pew survey asked people what could be responsible for them losing their job. Interestingly, people are much more likely to be worried about other people taking their current job, or about poor management destroying their current job, than about robots stealing their current job.

- 26% of workers are concerned that they might lose their current jobs because the company they work for is poorly managed.

- 22% are concerned about losing their jobs because their overall industry is shrinking.

- 20% are concerned that their employer might find someone who is willing to do their jobs for less money.

- 13% are concerned that they won’t be able to keep up with the technical skills needed to stay competitive in their jobs.

- 11% are concerned that their employer might use machines or computer programs to replace human workers.

And as for the future, while 66% of respondents expect that, in 50 years, robots will do most of the work humans do now, only 20% expect their own job/profession will no longer exist.

Similar results can be found in this Gallup poll and this Eurobarometer poll.

It’s also good to know that, according to a recent OECD report, workers in New Zealand are less at risk of automation. And if you want to check how likely it is that your job will be done by a machine, you can look here.

- But if we do lose our jobs, what then?

Many could be poor and bored. But it doesn’t have to be that way.

In the end, there's really two separate questions: there's an employment question, in which the fundamental question is can we find fulfilling ways to spend our time if robots take our jobs? And there’s an income question, can we find a stable and fair distribution of income? The answer to both will depend on not just how technology changes but how our institutions change in reaction to technological change. Do we embrace technology and increase funding for education, worker training, the arts, community service? Or do we allow inequality to continue to grow unchecked, pitting workers against those investing in robots? The challenge for society is to ensure that we solve both problems. That we help shape a society in which people can find fulfilling ways to spend their time. And to solve that problem we must also solve the separate problem of finding a stable and fair distribution of income.

More details about this topic can also be found in this Top 10 by Matt Nolan.

- Two extras. Not directly AI but related, since AI often relies on having lots of data...

If you think Cambridge Analytica had a decisive influence on the 2016 American election, better read this great ‘big-picture primer’ on the Cambridge Analytica case.

If you just want to know if this means Facebook got Trump elected: The answer is probably not, at least not because Cambridge Analytica made off with this data stash. Read The Verge’s critique of “psychographic profiling,” the data-mining buzzword that Cambridge Analytica claims allows it to target voters based on their personality traits (as opposed to traditional demographic targeting). After you understand the theory, read this 2017 investigation by The New York Times claiming that psychographic profiling wasn’t used in the Trump campaign and that it was ineffective when used by Ted Cruz’s team in his failed presidential bid.

And even trash can be data! A hilarious article about Mark Zuckerberg's trash.

After four years of stalling, I finally decided to go ahead with the latter idea. My quarter-baked plan was this: I’d drive to his Mission District pied-à-terre on trash collection day, snatch a few bags of whatever, and dig through it. I could learn more about Mark Zuckerberg’s habits and interests, creating my own ad profile of him. Then I could sell this information to brands looking to target that coveted "male, 18-34, billionaire” demographic. Think of it as a physical version of Facebook’s business model.

11 Comments

AI will be a topic of debate for some time to come, but personally I do not believe it will ever be able to fully emulate human thought. Humans so often demonstrate themselves to be largely irrational in so many things. For example, I read a synopsis of the most successful shares in the world by James Cornell (author of Stock Market news letter), who identified Philip Morris (tobacco) as being it. It has appreciated something like 71,000% since 1983, but is also one of the most hated companies in the world.

AI will always be fairly successful within a logic system, such as manufacturing, or even transportation where specific precision is the goal. But humans are just too emotional for a machine to ever be able to fully emulate them. Disagree? Think about your wife, husband, or partner and consider whether you fully understand and can rationalise everything they have done or said, or believe.

It is not just Humans who have irrationality. Most of the programs I wrote in a lifetime of coding had irrationality and the users were good at pointing them out.

We already have children who spend 95% of their free time interacting with a screen and maybe 5% with other humans. It is easy to imagine a future when instead of searching for a human partner in life we will marry a robot - programmed to present just the right degree of stimulation at the times you need it rather than like my own beloved who usually gives me the messages I need but when I would prefer to be reading.

"It is not just Humans who have irrationality. Most of the programs I wrote in a lifetime of coding had irrationality and the users were good at pointing them out." I know exactly what you mean, been there too!

What is AI?

If you look at most take-over-the-world AI robots/systems in the movies they are a combination of

- intelligence (they can make complex abstract calculations very fast)

- self awareness/sentience (They know of and perceive themselves)

- free will (They are not constrained by programming and can make their own choices)

The reality is that most AI is constrained by programming to a very specific task.

For example chat bots can learn how to better interpret a question.

They learn the grammatical nuances to ensure they can match the question to those already in their programming.

But they can only provide an answer they already have.

They can't learn/calculate new answers by themselves.

"But they can only provide an answer they already have. They can't learn/calculate new answers by themselves"

Actually....I'd say they can. The fact that they take data from 'experience' then use that information to provide an answer or make a decision is learning. They are not relying on data already having been programmed into them to achieve this; instead they obtain this data for themselves. The same way we obtain information over the course of our lives to make choices etc.

https://deepmind.com/blog/deep-reinforcement-learning/

The recent documentary 'Do you trust this computer' was a bit dramatic but did explore some pretty interesting areas as well as highlighted some pretty impressive milestones that AI has already achieved.

Yes, "fuzzy Logic" was a term developed to help develop a "learning algorithm". To a limited extent computers will be able to seek out new data and learn. But human irrationality will always set us apart.

Interesting, they are indeed learning some things.

A good top ten. I was an AI doubter until the recent improvements in face recognition and language translation.

A useful comparison to the long gestation of AI is the development of the railways. That took a couple of centuries to develop boilers that didn't explode, efficiency so it could carry its fuel and lightness so the rails didn't snap. When they did arrive, it was quietly in 1825 at Stockton Darlington, and then with massive publicity 5 years later at the Manchester Liverpool line. Did the first passengers in 1830 expect rails crossing the USA and India within their lifetimes and speeds faster than the fastest animal? Did they realise the world would change so dramatically and so quickly? Did they anticipate simple things like having standard time or quirks like the first professional sporting teams or fresh food in cities? How many young men started training to be farriers while railway lines were being laid?

My pick: avoid starting a degree in law or accountancy; those professions will remain but most of their tasks will simply be automated. Don't buy an expensive bus or truck unless you can write off the costs within maybe five years - unmanned vehicles will arrive.

It is a case of expect the unexpected.

Yeah, agree, it's very interesting how quickly some AI models have improved. People can fall into the trap of thinking of current performance at a task as a limit of some sort, or representative of future performance.

There's a good reason so many AI services are free or low cost: the more data thrown at them the better they become. As with language or facial recognition, so with self-driving. Practice makes improvement, and with incredible rapidity.

Tomorrow's AI will be like Yesterday's Electricity.

We just do not believe it Today.

Enjoyable and stimulating top ten.

Item 4 - the author doesn't recognise that Google Translate will accelerate the loss of nuance in human communications. Those subtleties of language he/she illustrates are already being lost as more and more people think in slogans. Google Translate will improve and human speech will deteriorate, until they eventually converge.

Item 7 - Love the uncompromising tone of this article but have a couple of issues with it. The first is that autonomous driving and translating are fairly sophisticated forms of AI. Surely we would have seen some impact on employment from the more basic forms of AI by now? The unemployment statistics say otherwise and the participation rate in plenty of countries outside the US hasn't deteriorated (eg NZ & Aus).

Secondly, the right seems to offer the best solution to AI-enforced unemployment. Automate the public sector, especially politicians, and use the savings to buy everyone shares in the private companies that will drive the future. Loss of status will be less severe when everyone is an owner than it will be by putting large numbers of people on a benefit disguised under a fancy name like UBI.
