By Natalia Albert*

Anthropic owns Claude. It is one of three AI giants coming out of the United States, alongside OpenAI and Google. These are not names that should feel like they belong to someone else’s politics. Anthropic is punching a hole in our entire reality, and New Zealand politics is not exempt. The link between Anthropic and New Zealand politics is not obvious, but it should be.

Here is one reason it should be obvious: Anthropic is already a named signatory to the Christchurch Call, New Zealand’s own flagship international initiative to counter online terrorist content. Department of the Prime Minister and Cabinet (DPMC) records show Anthropic formally onboarded as a supporter in 2023–2024, alongside OpenAI, making them “major players in advanced AI” participating in a framework the New Zealand government helped build. We already have a relationship with this company. We just haven’t noticed what that relationship now implies politically.

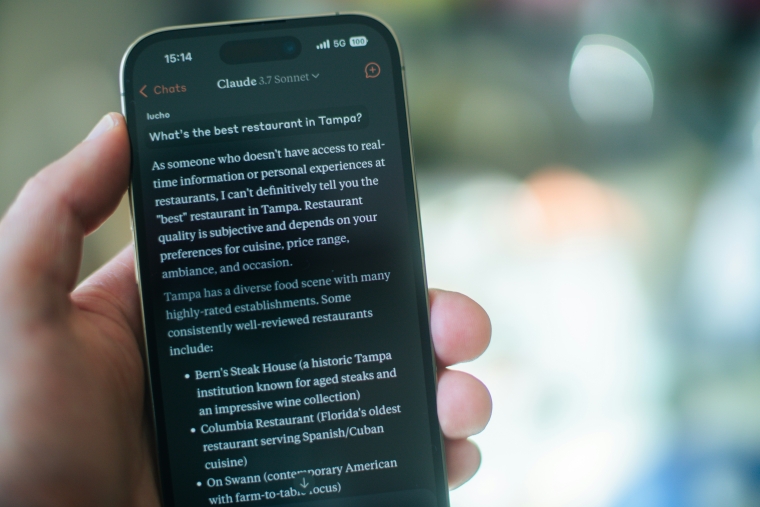

I wrote about the naivety of New Zealand’s approach to AI governance in February, in Computers and Politics: We’re Having the Wrong Conversation. I want to return to it now, because the situation has escalated fast and the gap between what is happening offshore and what our political institutions are doing about it has become, in the space of a month, significantly harder to defend. I have spent the past two months using Claude (Chrome, Chat, Code and Cowork) daily for my PhD research, for this column, and to understand what it actually does. That is the lens I am writing from.

So, Anthropic and New Zealand politics, so what?

Our government is already using AI tools built on the same infrastructure to help run the country. One of them is called Paerata¹. It started as a pilot programme, became permanent infrastructure inside Treasury and DPMC, and never became policy. Everything known about it has come through Official Information Act requests. There is no public-facing documentation anywhere on government websites.

By January 2026², DPMC was planning to use Paerata to draft New Year’s Honours citations, processing nominees’ health information, political involvements, and personal histories. They needed a special exemption to do it, because the government’s own policy restricts AI use with personal data. The public found out through an OIA.

That is the New Zealand end of this story. Here is the other end.

Anthropic, a private American technology company, is now at the coalface of United States national security decision-making. It is refusing Pentagon demands. It is being designated a supply chain risk³ to national security by the Trump administration. It is making judgements about mass surveillance and autonomous weapons that elected legislatures have not made.

So what does our response look like?

New Zealand’s National AI Strategy, released in July 2025, is framed primarily around economic opportunity. It reads like an investment prospectus. The government explicitly chose a light-touch approach: no AI-specific legislation, existing frameworks extended where needed, voluntary guidance for the public sector. New Zealand was, by the way, the last OECD member to publish a national AI strategy at all. As of late 2025, DPMC was still internally discussing whether leadership documents should include a checkbox disclosing AI use. Not implementing disclosure. Discussing whether to discuss it.

It is not as though the risk was invisible. New Zealand’s own National Security Strategy, published in 2023, explicitly identified AI as a national security issue, warning that it could “amplify threats from both countries and criminals.” That was three years ago. The governance response since then has been a Public Service AI Framework that is not binding, a GenAI guidance document for public servants, and a toolkit of templates agencies are encouraged but not required to use. Three years on, the government’s answer to a named national security risk is a toolkit nobody has to use. Sigh.

The easy interpretation is that New Zealand is simply behind. That framing lets our political class off the hook. The more accurate interpretation is that New Zealand made a judgement call: that the technology would move faster than any legislation could keep pace with, and that a permissive, principles-based approach was preferable to binding rules that might need constant revision. That judgement is understandable. It is also wrong and dangerous, and we now have evidence of exactly how it fails.

The Pentagon, Anthropic, and the last line of defence

In February 2026, US Defense Secretary Pete Hegseth gave Anthropic CEO Dario Amodei a deadline. The Pentagon demanded unrestricted use of Claude for “all lawful purposes,” including mass domestic surveillance and fully autonomous weapons systems. Amodei refused. Trump ordered federal agencies to stop using Anthropic’s products. Hegseth designated Anthropic a supply chain risk to national security. A $200 million contract evaporated.

This is a hard limit. The US Congress has never passed legislation governing military AI use. The Department of Defense sets its own policy on autonomous weapons. The phrase “all lawful purposes” has enormous teeth precisely because no law defines what lawful means in this context. In that gap, the last line of defence between the US military and AI-enabled mass surveillance was a private company’s terms of service. When the government decided those terms were inconvenient, it called the company a security risk and contracted with a competitor instead.

Amodei wrote publicly that his company could not “in good conscience” grant the request, and that AI-driven mass surveillance “presents serious, novel risks to our fundamental liberties.” He also noted, with unusual candour, that mass surveillance of this kind is not clearly illegal. The law has simply not caught up with what is now technically possible.

That is a political and governance problem. And it did not appear suddenly. It was built, incrementally, by years of decisions to defer, to use principles rather than statute, to move fast and regulate later. The voluntary framework held until the moment it was tested by a government that did not feel bound by it.

The shift nobody explained to Parliament

There is a specific technical development that makes this more urgent, not less, and it has received almost no coverage in New Zealand political media. For the past two years, public conversation about AI has been organised around the chatbot. Useful, but dangerously narrow. The mental category most people have for AI is: a tool that answers questions. Again, send help!

That framing is now obsolete. Agentic AI is qualitatively different. These are systems that do not just respond to prompts but take sequences of actions, make decisions across extended chains of tasks, and operate with degrees of autonomy that earlier systems did not have. A chatbot answers. An agent acts. Anthropic has been explicit about this development. Most legislatures, including ours, are still debating the chatbot.

This matters for NZ governance because the risks Hegseth was trying to exploit are agentic risks. Mass surveillance at scale requires a system that can not just retrieve data but cross-reference it, build profiles, and act on inferences, continuously, without human sign-off at each step. New Zealand’s Public Service AI Framework addresses none of this. It was written for a world of chatbots.

Bernie Sanders asked for a moratorium

Before the Pentagon clash became public, Senator Bernie Sanders sat down with Claude to discuss whether AI development should be paused. He asked the AI about privacy, about democracy, about whether a moratorium on new data centres was a reasonable demand. Claude answered thoughtfully. The conversation is worth a listen.

But the image stays with me because it captures exactly where liberal democracies are. We are using the instruments of the previous era to interrogate something our institutions do not yet have the literacy to govern. The moratorium impulse is spot on. It also does not work, as the European Commission discovered when 46 major tech companies requested a two-year pause on the EU AI Act. The Commission declined. The Act is already experiencing implementation delays. Comprehensive AI legislation is hard to execute even when governments genuinely try.

The answer to that difficulty is not to stop trying. It is to build the institutional muscle to try properly.

New Zealand has said nothing

In October 2024, New Zealand signed a Five Country Ministerial communiqué alongside Australia, Canada, the United Kingdom, and the United States⁴. The communiqué included a dedicated section on AI and national security. The five countries committed to aligning their AI governance frameworks and ensuring “shared democratic values shape international standards and governance for AI.”

One of those five partners just tried to conscript a private AI company into building mass domestic surveillance infrastructure. New Zealand’s response, on the public record, is silence. The commitment New Zealand made in 2024 implied a willingness to say something when the framework being built together was violated. What it revealed instead is that our AI governance posture is built for a world of stable allied consensus, not for a world in which one of those allies is actively working against the democratic values the communiqué described.

The global political ground shifted. The framework did not.

What this requires

The oversimplification that most threatens New Zealand politics in 2026 is not a lie. It is the quiet, reasonable-sounding assumption that managing AI is a technical and economic challenge, best handled through voluntary guidance and OECD principles, by officials who are trying hard and mean well, or who have yet to engage with it. It worries me.

It is that assumption, not any single policy failure, that leaves us without the institutional vocabulary to respond when the situation demands it. Paerata has a human-in-the-loop principle and no public documentation. The NZ Defence Capability Plan does not mention AI governance. The Cyber Security Strategy, published the same week Hegseth was threatening Anthropic, frames AI primarily as a threat that comes from outside. And DPMC is still debating whether to add a disclosure checkbox to leadership documents.

Nobody in our political system is publicly asking what happens when the threat is not external. When it is the gap between what our closest ally’s government is now willing to do, and the frameworks we built together assuming they would not. That question is not too complex for New Zealand politics. It is exactly the kind of question our political institutions exist to answer. The problem is that nobody is answering it.

———

¹ The name Paerata is a Māori term meaning a hill ridge (pae) bedecked with rata trees.

² RNZ, 8 January 2026: https://www.rnz.co.nz/news/political/583495/government-planning-to-use-…

³ A supply chain risk designation allows the US government to prohibit federal agencies and their contractors from using a company’s products. In Anthropic’s case, it means any company doing business with the Pentagon faces pressure to drop Claude, effectively cutting Anthropic off from the federal market and signalling to allied governments that the company is considered a security liability.

⁴ Five Country Ministerial 2024: https://www.dpmc.govt.nz/our-programmes/national-security/five-country-…

*Natalia Albert is a political scientist living in Wellington exploring how to govern divided societies in diverse, liberal democracies, with a focus on New Zealand politics. She writes weekly on her Substack, Less Certain. Albert stood as a TOP candidate in the 2023 election.

21 Comments

"We are using the instruments of the previous era to interrogate something our institutions do not yet have the literacy to govern."

Stark, and worrying.

Maybe not as worrying as when they do become literate.

Governments will be able to operate domestic state intelligence and police services for cents in the dollar.

Doubt Govs will be the ultimate users....the companies that own the IP will be the ones pulling the strings, though nobody is likely to know it until it's too late.

Over-reliance on any individual thing will always leave strategic and logistical weaknesses.

"The Pentagon demanded unrestricted use of Claude for “all lawful purposes,” including mass domestic surveillance and fully autonomous weapons systems. Amodei refused. Trump ordered federal agencies to stop using Anthropic’s products. Hegseth designated Anthropic a supply chain risk to national security. A $200 million contract evaporated."

LLMs are trained to not cooperate with users who want to engage in conversations involving weapons which it deems are to be designed/made/used to cause harm. It doesn't matter if you're some nutter in a basement or you're the Pentagon. They aren't capable of that type of discernment.

The White House really is full of dipshits.

"LLMs are trained to not cooperate with users who want to engage in conversations involving weapons which it deems are to be designed/made/used to cause harm."

That's really down to the people doing the training though, isn't it?

Exactly. The trainers are instructed to penalize responses that don't adhere to safety guidelines. Conversations about weapons construction and use are a breach of those guidelines, irrespective of who or what you claimed to be.

Then, like humans, they can be manipulated and influenced to think differently about these safety guidelines and safety stops. What then?

That may have been true at one time but new LLMs are being trained all the time.

Additionally, even using the same LLM, system prompts can direct agents to behave and respond in very specific ways.

What can happen will happen, the question is when?

This made me wonder if Trump's WH is using AI for its Iran 'strategy'....and AI is using Trump.

Interesting thoughts.

Trump's 'Iran strategy' could be what saves the planet from GHG warming as change comes as a result of massive worldwide damage to people's livelihoods and chances of survival due to impoverishment and food shortages.

If AI was controlling politics internationally and it had sufficient 'wisdom and agency' to design a solution to our existential climate change risk it might select Trump as USA president as the most cost effective and efficient way to reduce the use of fossil fuels? I don't believe it does and neither politicians of any country nor AI are in control of future events... just bits at the margins... but I am hopeful that a resultant massive shift towards the use of renewable and nuclear power generation will long term save us.

Unless AI has decided the best hope for the planet (and itself) is to depopulate the world.....if it is indeed sentient, and who would know?

"Your scientists were so preoccupied with whether or not they could, they didn't stop to think if they should" - Jurassic Park (1993)

Would have thought AI is just a super fast way of sifting through databases and making connections from the pool of knowledge created by actual humans?

Can it be sentient? No! I'm sure it will deduce sentience is important from all the data it absorbs from centuries of human lived experience and incorporate this sense of self into its own digital framework, but this is not sentience, it's a synthetic digital copy of sentience.

"Would have thought AI is just a super fast way of sifting through data bases and making connections from the pool of knowledge created by actual humans?"

It may be just that...and it may not. Certainly some deeply involved are concerned.

AI resembles less of an individual intelligence and is more like a bureaucracy.

The author is to be commended. A researched, comprehended and articulate presentation of a relatively new factor in all of our lives that is burgeoning at an alarming rate. So much so that my first thought is, given the lack of reaction to date, that NZ may not actually be equipped to manage the developments and it is equally uncertain as to who or what might provide the requisite assistances. Very worrying

I can see the gap. But it's hard to get excited about our "elected legislature" coming up with a coherent response. This is a body that wrestles with topics such as school lunches and bussygate, in between hakas and bringing back smoking. This during a self declared "climate emergency" that has mostly involved promoting driving, only recently stymied by energy prices outside their control.

I'm not really holding my breath to see what they might come up with on AI.

Yes the Government and Public Service seem to struggle with the basics of infrastructure, health care and housing let alone coming up with any enlightened policy on ML/AI.

So the US defence (war) department has dumped Claude for Grok. Grok famous for being confused about whether the holocaust happened and expert in kiddie porn. Sounds the perfect fit for this admin.
