The January 2018 Raving highlighted that the ANZ business survey went AWOL after the 2017 election.

In general the ANZ survey is still corrupted by some respondents using it as a political football, but by now I thought the media and ANZ's economists would have started to question its usefulness as an indicator of near-term economic prospects rather than largely interpreting it at face value.

ANZ's relatively new chief economist has inherited a survey that her predecessor largely didn't question, making it hard for her to highlight its shortcomings.

Of concern, however, is that some questionable analysis is now being used to make the survey appear more useful than it is, which has prompted me to revisit the survey. This Raving shows the shortcomings of some, but not all, of the components of the survey so readers can be better informed about its usefulness.

The ANZ business survey has become a political toy for some of the respondents

The latest ANZ business survey is out and the news isn't good. There was once a time the ANZ business survey provided useful insights into near-term economic prospects but, with some exceptions, that stopped being the case in 2002.

For over 15 years the survey has been corrupted by political gamesmanship by some respondents, but it still largely gets reported at face value by the media. Some bank economists point out shortcomings, like Westpac's Michael Gordon when he wrote: "The ANZ business confidence survey has greatly overstated the extent of the slowdown in growth over the last year or so". But he didn't go far enough.

It is time to put the ANZ business survey under the microscope. Michael referred to the ANZ business confidence survey, which has been corrupted by some respondents using it to give the finger to the now not-so-new government.

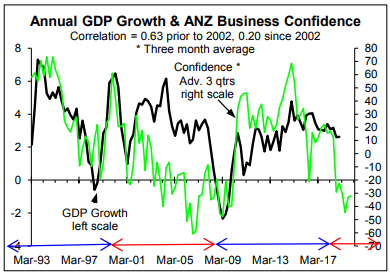

Prior to the Clark Labour Government introducing some business-unfriendly policies in 2002, the ANZ business confidence survey was a useful leading indicator of GDP growth. Adding to its usefulness, the peak correlation was with it leading by three quarters. However, from 2002 until National came to power the survey massively understated near-term economic growth prospects, as can be seen in much of the period highlighted by the left-hand red-arrowed line in the chart below.

Once National came back to power the business confidence survey started to overstate near-term growth prospects (second blue-arrowed line). Now Labour is back in power the survey suggests we are heading into a deep recession.

The tumble in the survey in mid-2015, which is shown in early 2016 in the previous chart because the survey has been advanced by three quarters, is an interesting case study. At the time RBNZ Governor Graeme Wheeler said he was looking for evidence that the large fall in dairy farm incomes was going to have a negative impact on economic growth, evidence that would justify OCR cuts. It appears some respondents to the survey gave the governor his "evidence". Is history repeating?!

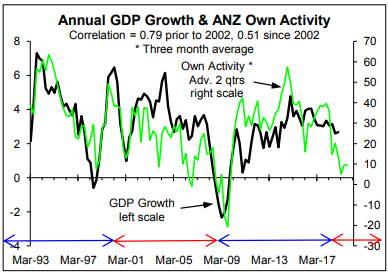

The survey of firms' own activity provides superior insights, although it has still been corrupted by some of the respondents playing political games (next chart). It was too pessimistic during much of the previous Labour Government's term, too optimistic during much of the last National Government's term, exhibited the Wheeler-inspired temporary fall in mid-2015, and has been overly pessimistic since the Labour Coalition Government was announced. It is currently corrupted to the extent I question whether it provides any useful insights.

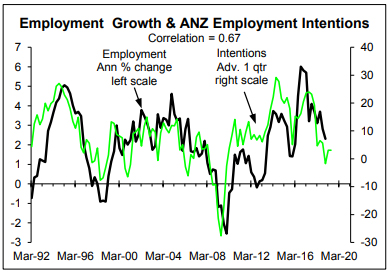

However, some components of the ANZ business survey are still somewhat useful (chart below).

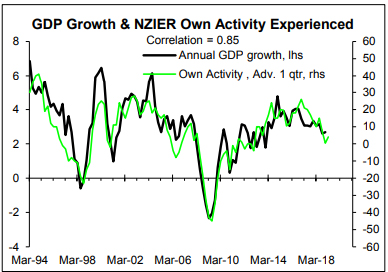

Looking at how some other business surveys have behaved as leading indicators of growth confirms that the ANZ business survey in general has been hugely corrupted by political gamesmanship. The NZIER own activity experienced survey shows no clear signs of political corruption (next chart). It has had moments, like during 2017 when it was overly optimistic, but it has largely stood the test of time. The correlation over the full period shown in the chart below is 0.85 based on the survey advanced or leading by one quarter, while it was 0.80 up to 2002 and 0.90 after 2002.

To be useful, a survey has to have a reasonably stable relationship with the thing of interest over a reasonably protracted period of time. The NZIER survey rates quite well in this respect, while the ANZ business confidence survey and, to a lesser extent, the own activity survey don't.

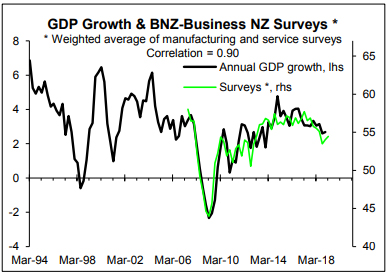

The BNZ-Business NZ manufacturing and service sector surveys are newcomers, but a weighted average, giving more weight to the service sector survey, is quite a useful "leading" indicator of annual GDP growth (next chart). The correlation is high at 0.9 compared to a maximum possible 1.0. However, the peak correlation is coincident rather than with the survey leading (i.e. it is a barometer of economic growth, not a leading indicator).

As an aside, the NZIER and BNZ-Business NZ surveys are showing a hint of improvement, although they may have deteriorated somewhat by the time the next results are released.

Why has the ANZ business survey been corrupted by political gamesmanship when the NZIER and BNZ-Business NZ surveys don't appear to be? The surveys will have some overlap in terms of the respondents but probably not lots. At face value this points to ANZ's business clients on average being more "political" than other groups of businesses.

Instead, I suspect the ANZ business survey has become a victim of its own success. It has got lots of media coverage over many years making it an ideal vehicle for some respondents to use to send a political message. Long may it last that the NZIER and BNZ-Business NZ surveys aren't corrupted by political gamesmanship by some respondents.

ANZ economist uses misleading charts to try and make the survey look relevant

I have sympathy for Sharon Zollner, ANZ's chief economist. Her forerunners never did what I think they should have done (i.e. identified which of the components of the survey have been corrupted by political bias and which are still of use). But of concern is the low-quality analysis used to try and make the survey look more relevant than it is.

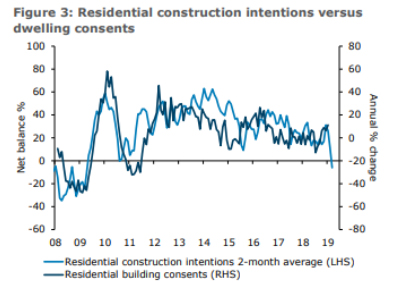

Sharon provided three reasons why the sharp fall in the residential builder survey could be discounted, but largely portrayed it at face value, while the chart below was used to put the survey in context as an indicator of residential building.

It is good to see the survey put in context, but the chart above and the related commentary have a few shortcomings, sufficient to warrant investigating the relationship the chart portrays.

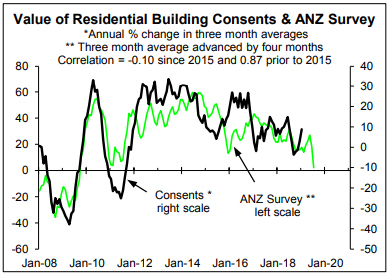

It wasn't clear which measure of residential building consents was used in the previous chart. The chart below replicates the previous one as best I can and provides a similar picture. While the correlation between the ANZ survey of residential builders and growth in the total value of residential building consents is high at 0.87 prior to 2015, since 2015 it is -0.1. The survey hasn't been a reliable leading indicator in recent years. The chart above and the related commentary in the ANZ report provided no insights into whether the survey led consents or how reliable it was over time.

One of the reasons for the latest fall in the ANZ survey of residential builders may be some of the respondents using it, like the business confidence and own activity components, to give RBNZ Governor Adrian Orr "evidence" to justify OCR cuts. Interestingly, this survey also fell in mid-2015 when Governor Wheeler was fishing for justification for OCR cuts. This issue should have been investigated but wasn't.

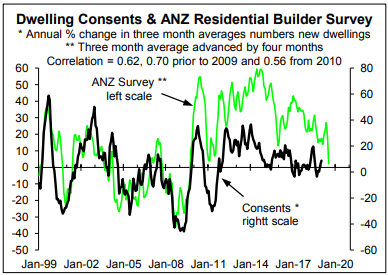

A trick used by economists and others is to select the period that tells the story they want to tell. The chart below is similar to the previous one but covers a longer period. It shows a major change in the relationship after 2008 that probably explains why the chart in the ANZ report only started in 2008: a way of avoiding a problem with the survey.

The previous chart uses the number of new dwelling consents as the measure of residential building rather than the value of consents. The ANZ survey should be an indicator of activity or volume, not value, which can be affected by price changes as well as volume changes. However, it is quite understandable why the chart in the ANZ report starts in 2008. It avoids having to explain why the survey mysteriously underwent a stepwise change in 2009. Given the stepwise change there is some justification in recalibrating starting in 2008. But I am always wary when I see a chart starting at an arbitrary date when a longer period could have been used and would be useful to put things like surveys in the proper context.

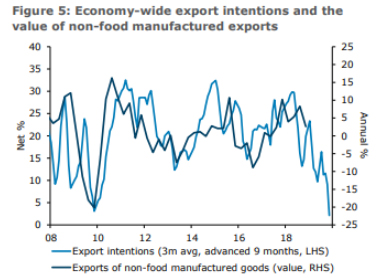

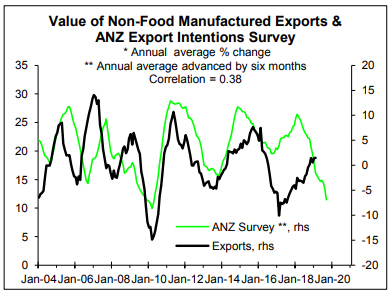

It is a similar story with the chart below taken from the ANZ report that shows the relationship between the survey of all exporters' intentions advanced by nine months and the annual % change in the value of non-food manufactured exports. Again, as best I can the bottom chart replicates the one below but it is extended back as far as I can based on the data available from Statistics NZ. Since 2004 there is only a 0.38 correlation between the two based on the ANZ survey advanced by six months.

Comparing the previous two charts highlights my concern about selective use of periods. If a leading indicator is useful to the extent that people should take it seriously, it should stand the test of time. Clearly the ANZ survey of exporters' intentions doesn't (e.g. the correlation prior to 2008 is -0.27).

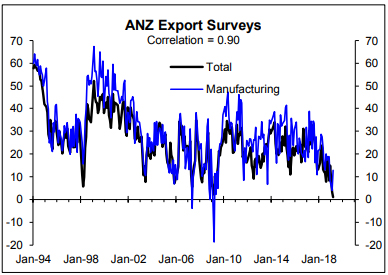

A minor criticism is that in looking at manufactured exports the manufacturing intentions component of the ANZ survey should have been used. This oversight isn't large in the context of the quite high correlation between the survey of all exporters and the manufacturing one although they did behave differently in March (next chart).

In the ANZ report Zollner writes: "Meanwhile, a further drop in export intentions takes it to levels lower than during the Asian Financial Crisis of 1998-9 and the Global Financial Crisis of 2008-9. This doesn't square with anecdotes and it is hard to know what to make of it."

The answer is simple: many components of the ANZ survey are corrupted by political games. The next two charts are useful in trying to quantify the political bias in the ANZ export intentions survey.

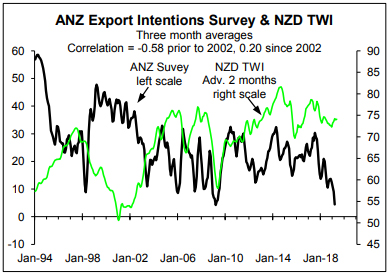

Prior to the ANZ business survey in general starting to show political bias in 2002, the NZ dollar trade weighted exchange rate index (NZD TWI) was a somewhat useful leading indicator of the ANZ export survey with the peak correlation at a modest -0.58 being with it leading by two months (the previous chart). Since 2002 the correlation is mildly positive at 0.20 rather than negative. Other factors will have impacted but it appears that this survey has had problems for a long time.

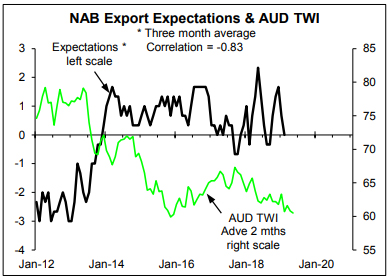

Zollner also wrote: "Sharply lower export intentions despite a well-behaved exchange rate suggest global factors are a part of it …". The chart below is an Australian equivalent of the previous NZ chart but it can only be backdated to 2012 because I don't have data for the NAB export expectations survey prior to then. The lack of data means the chart should be interpreted with caution. But it shows a high negative correlation with the AUD TWI leading the NAB export survey by two months.

Australia will have been impacted by adverse global developments similar to NZ. This is probably why the NAB survey isn't somewhat higher than it has been recently in light of the lower AUD TWI. I doubt the NAB survey has been corrupted by political bias in the way the ANZ NZ one has, making it a useful benchmark for assessing the extent to which global factors account for the extremely low level of the ANZ NZ export survey. The answer seems to be "a bit", but the main explanation is political bias. It only takes a small amount of research to reveal why the ANZ export survey is so low.

In her favour, Zollner noted that "The data [i.e. the ANZ export survey] has not always been a good indicator for 'discretionary' exports ie non-food manufactured exports …", but she goes on to conclude that "all up, the signal should certainly not be dismissed out of hand". To some extent this quotes Zollner out of context, like the selective use of time periods in some charts in the latest ANZ report. The commentary on the results of the ANZ export survey lacks quality, supporting analysis. But there is nothing new in this; again, Zollner inherited a tradition of low-quality commentary/analysis.

This article is re-posted here with permission. The original is here.

9 Comments

Sorry Rodney but I don't agree, the bulk of respondents will "tell it how it is". Inevitably sentiment will likely track (actual) activity, with some sort of lag. ANZ to a degree have a level of self-interest in the findings, as the boffins there will modify internal credit policies based on the outcome. The only ones trying to talk up the market are the government of the day, and reporters.

I find your evidence rather less compelling than Rodney's.

"Sorry Rodney but I don't agree, the bulk of respondents will "tell it how it is"."

You're completely denying the evidence presented that says that that's not "how it is".

It's unusual for an economist to be so blunt about other members of the family, but I do agree that there does seem to be some bias in commentaries.

That said, I have my doubts that it's intentionally designed to mislead.

As Rodney will know, in research departments in the big banks there is a whole team psychology at play: there tends to be a dominant person who dominates opinion or leads ideas, and there develops an element of "groupthink" within the department where the team members feed off each other, or pick up their cues from the person they see as the master.

The researchers may then go looking for evidence to support the narrative.

I may be wrong.

I have found that anecdotally, many of the clients using our practice are very very negative towards the coalition. They simply don't trust them one bit, and let's face it, these are business leaders who make business decisions that cumulatively affect the economy.

At worst they are fearful of a return to the labour relations of the 1970s and early 80s, they don't like all this talk about numerous new and additional taxes, and they don't trust the PM not to walk out of a cabinet meeting and blindside everyone by simply banning a whole industry without consultation, or even giving a clue beforehand.

And there is little doubt that cracks are appearing in the construction sector. I am seeing a raft of insolvencies and losses reported, many tradies are struggling to get paid by developers, wages are too high, and the builders' supply industry is a rort.

Just the talk of Capital Gains tax has been enough to quell the property market, and this will affect developers who cannot sell their stock.

Is that rant deliberately supportive of the article?

"the ANZ business survey in general has been hugely corrupted by political gamesmanship"

Or it's just the actual opinions of people finding themselves lumped with more and more costs in an environment where they are unable to recover it from customers.

Now, do these people know what they're talking about? Probably not - the actual experts have a fairly chequered record in this regard so what hope does your average director - but they are the ones who set wages and pay increases for the workforce who aren't on minimum wage. And in that regard, I find the idea of writing off their views as a political exercise somewhat of a political exercise itself. Whether they are wrong or not, this sentiment has a flow-on effect.

Outstanding.

I just don't rate economists, full stop.

Great article. I wonder if it's not actually intentional gamesmanship on behalf of responders, but may well be the disproportionate influence business lobby groups have on the mainstream media. Very compelling evidence that the effect is real, irrespective of the underlying cause.
